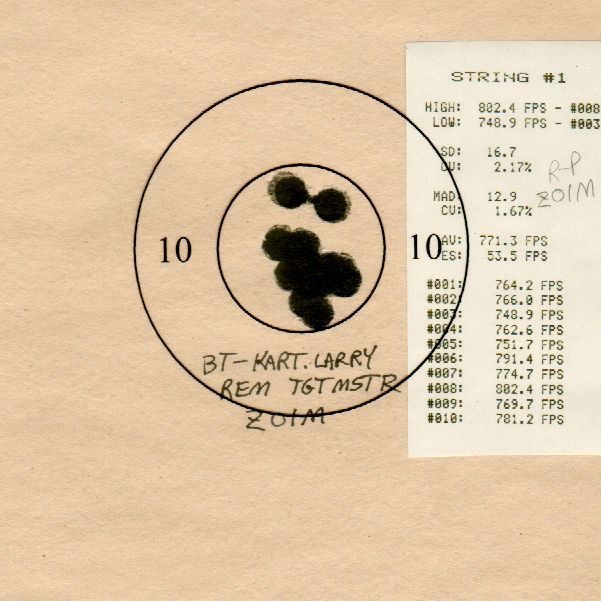

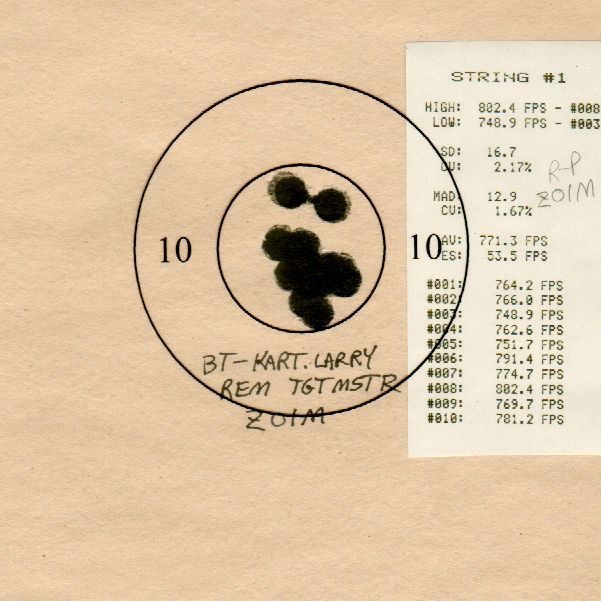

Dave Wilson, considered by many to be the dean of building, accurizing, and shooting the 32 S&W Long, recently offered to make available some test targets with accompanying load data for Alliant’s new canister powder, BE-86. My interest in this data wasn’t so much about the 32 itself, though I’d like to try one, but instead getting my hands on the target data for analysis. If you’ve been around the web watering holes where precision pistol shooters like to congregate, you’ve no doubt seen a Dave Wilson target, like so:

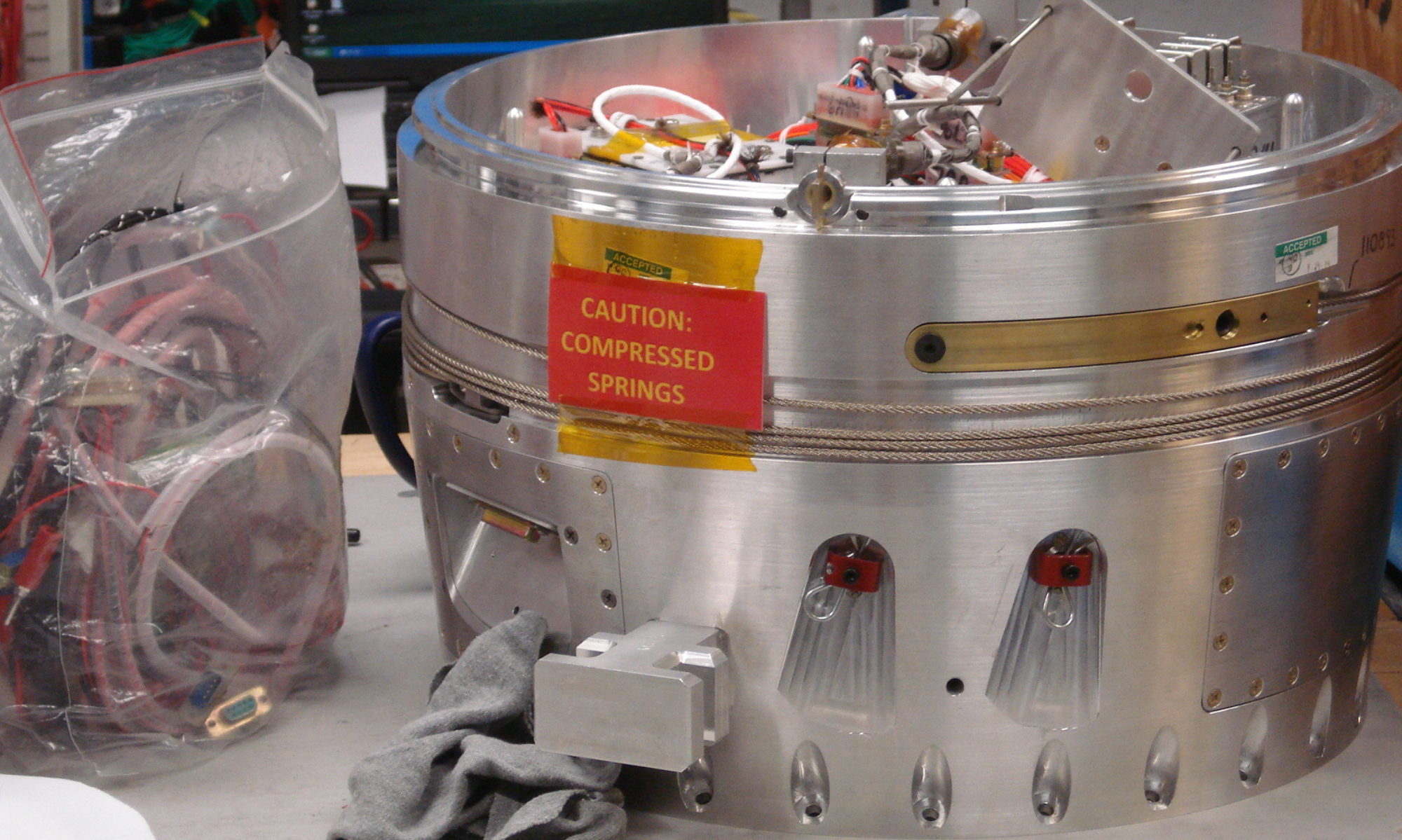

Dave has a barrel testing fixture that he uses for testing ammunition and barrels at 50 yards. He annotates the targets with the load data, includes the tape print-out from the chronograph, and inserts an appropriately sized bullseye 10/X-ring mask over the center of the target for group comparison. It’s really quite clever and makes the targets easy to sort and review.

Using Dave’s data is the perfect opportunity to investigate the methods and means I’d like to use for the testing I’ve been thinking and writing about here. I’m hopeful that I’ll build a template/process I can use in the future based off this exercise; feedback is encouraged to help refine the reporting, while the data sorting will help me optimize my data collection process.

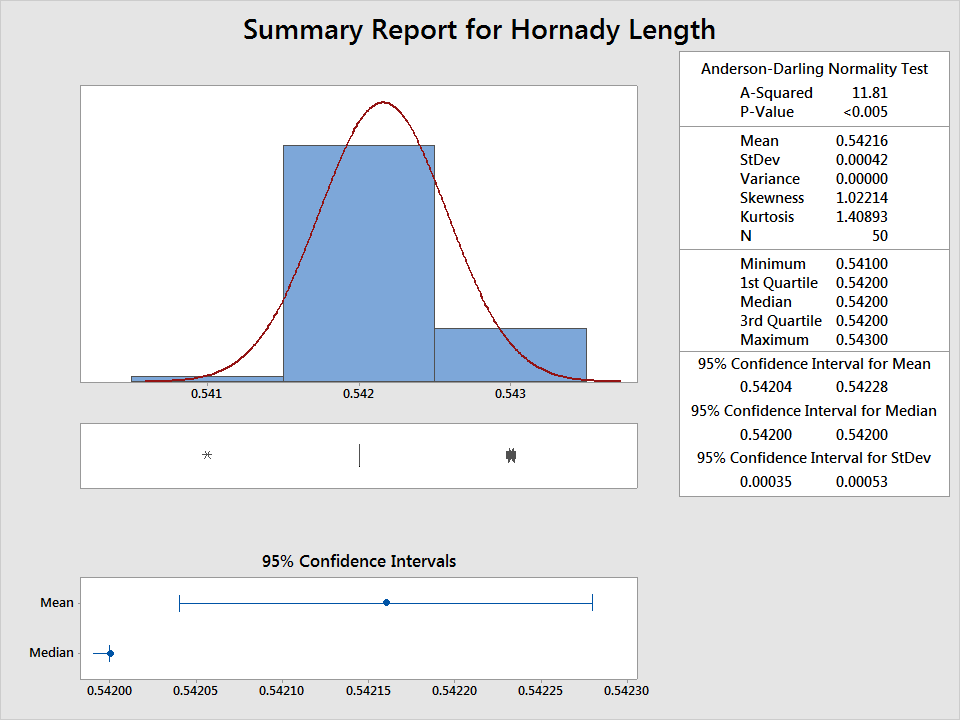

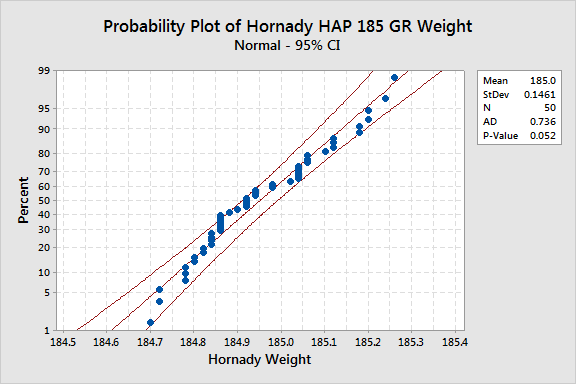

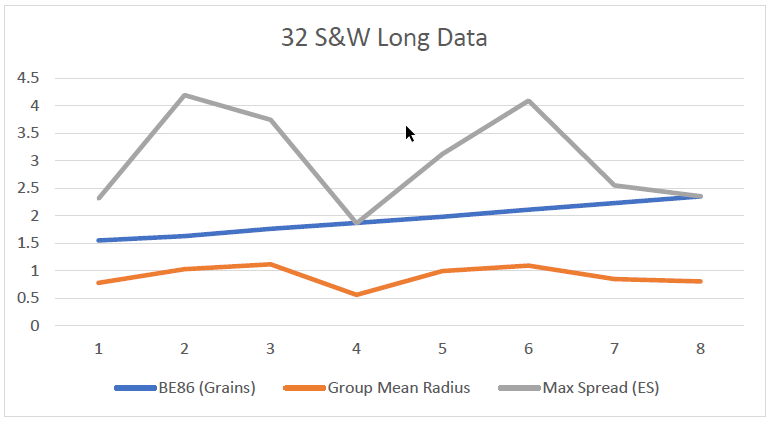

First, some facts. Dave used an iterative approach in his recent test, starting at 1.55 grains and ending at 2.35 grains. He shot ten shots per target per charge of powder: ten shots of 1.55 grains, ten of the next, and so on. There are eight different charge iterations, for 80 shots total. I built a small table in Excel (you could use OpenOffice if you prefer) to capture the charge weight in grains, the average velocity of the ten shots per charge (FPS), the max spread (ES), and the group mean radius. I collected the group mean radius dimensions using TargetScan, an Android/Apple application available on the Google Play/Apple marketplaces. The app costs about 10 bucks, and my experience with it, albeit limited, has warranted the cost. I printed out the targets that Dave sent over, cut out the bullet holes from one copy, and transferred the bullet “holes” to a 50 yard repair center to run through the app (the application expects white holes in a beige/black background to score properly). I’d then index the cutout bullet holes and position them to represent the next target, snap the picture, and repeat until I had all eight targets completed. This took a little while, but really, it was free data, so I couldn’t complain.

The aggregate score of 80 shots was 795-52X (99.4%, two-tenths more than the National Record held by Hershel Anderson; this raises an interesting question: did Hershel find the theoretical limit of human performance from a probability point of view?). The mean radius of 80 shots was .901″. I’d say the barrel/ammo/fixture can shoot.

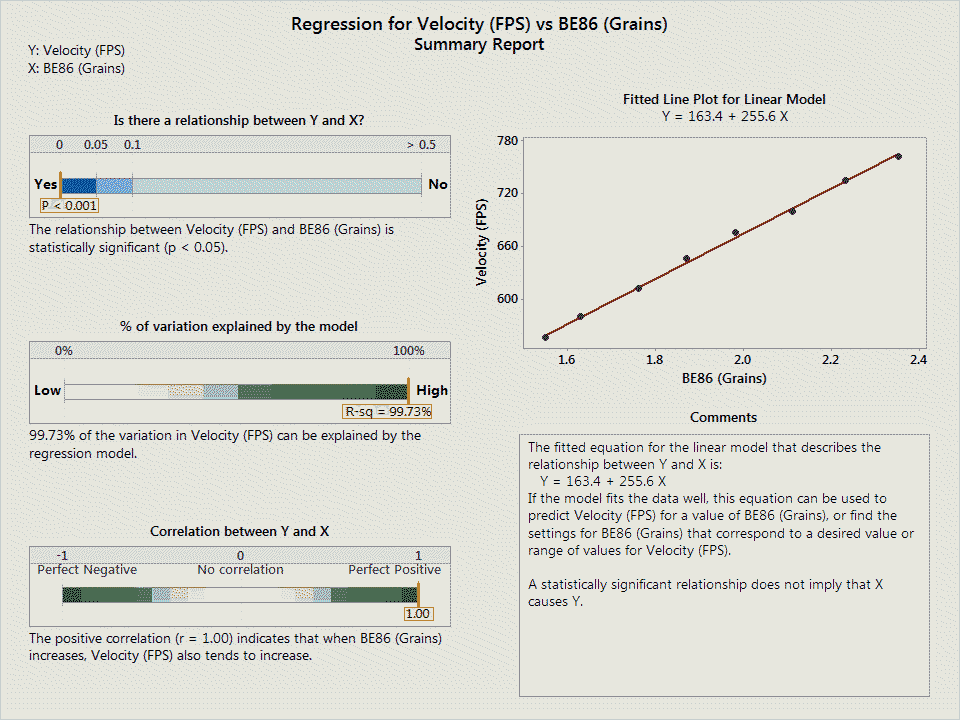

Before I continue, I need to pass along a disclaimer that Minitab attached to the sample data I used. Minitab dutifully reports: “Your sample size (n=8) is not large enough to provide a very precise estimate of the strength of the relationship. Measures of the strength of the relationship, such as R-Squared and R-Squared (adjusted), can vary a great deal. To obtain a more precise estimate, larger samples (typically 40 or more) should be used.” With this in mind, be aware that the conclusions I draw are based solely on the data presented and are limited in predictive ability by the number of targets I had for analysis. That said, I think there is enough data here to make a few meaningful comments.
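To get a feel for why Minitab complains about eight points, here is a minimal sketch that puts an approximate 95% confidence interval around a correlation coefficient using the Fisher z-transformation. This is a standard textbook approximation, not necessarily what Minitab does internally, and the `r = 0.80` value and the `correlation_ci` function name are made up for illustration; they are not results from Dave's targets.

```python
import math

def correlation_ci(r, n, z_crit=1.96):
    """Approximate confidence interval for a Pearson correlation r
    computed from n paired observations (Fisher z-transformation).
    z_crit=1.96 corresponds to roughly 95% coverage."""
    if n <= 3:
        raise ValueError("need more than 3 observations")
    z = math.atanh(r)              # move r onto an approximately normal scale
    se = 1.0 / math.sqrt(n - 3)    # standard error on the z scale
    lo = math.tanh(z - z_crit * se)  # back-transform the lower bound
    hi = math.tanh(z + z_crit * se)  # back-transform the upper bound
    return lo, hi

# Hypothetical correlation, compared at the two sample sizes Minitab mentions.
for n in (8, 40):
    lo, hi = correlation_ci(0.80, n)
    print(f"n={n:2d}: r=0.80, 95% CI roughly {lo:.2f} to {hi:.2f}")
```

The interval for eight points comes out far wider than the one for 40, which is the practical meaning of "can vary a great deal."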

To begin, I wanted to check whether the relationships that should be in the data actually are.

- The larger the powder charge, the higher the velocity.

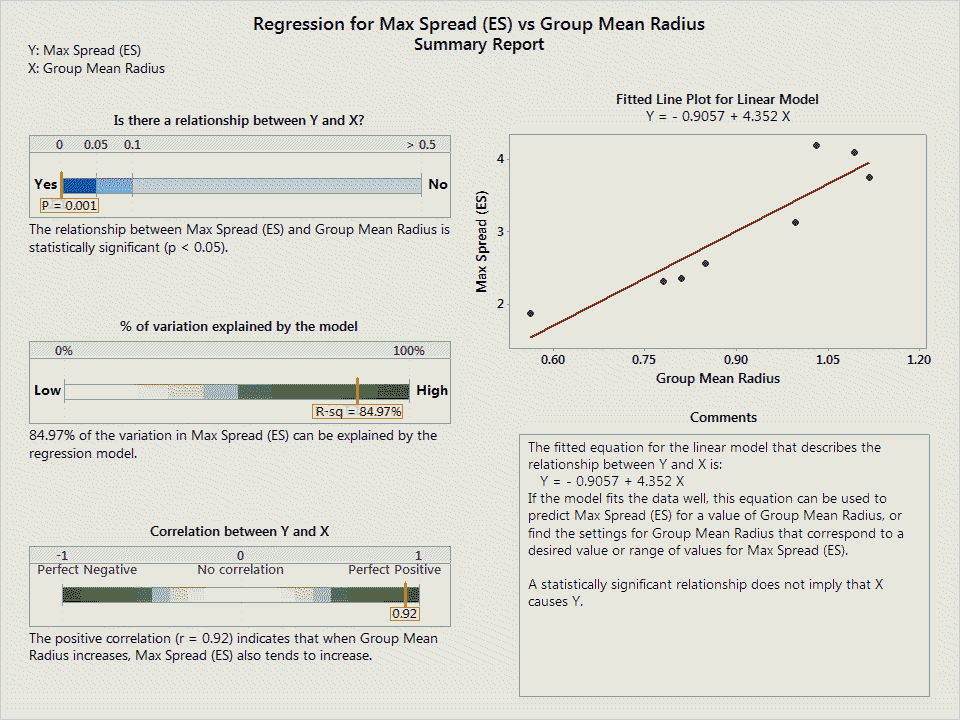

- The larger the extreme spread of a group, the larger the mean radius.

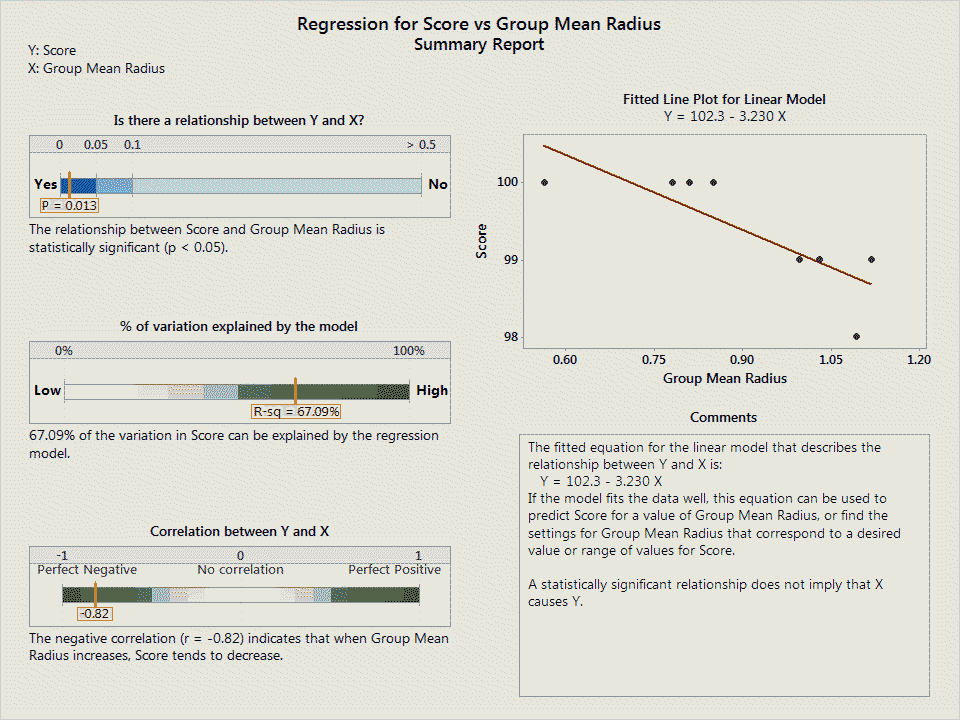

- The lower the score, the larger the mean radius.

These are intrinsic relationships that should exist in the system and are fundamental to how we think about accuracy. These are testable criteria, and any good combination of load and pistol, coupled with ideal test conditions, should demonstrate these principles.

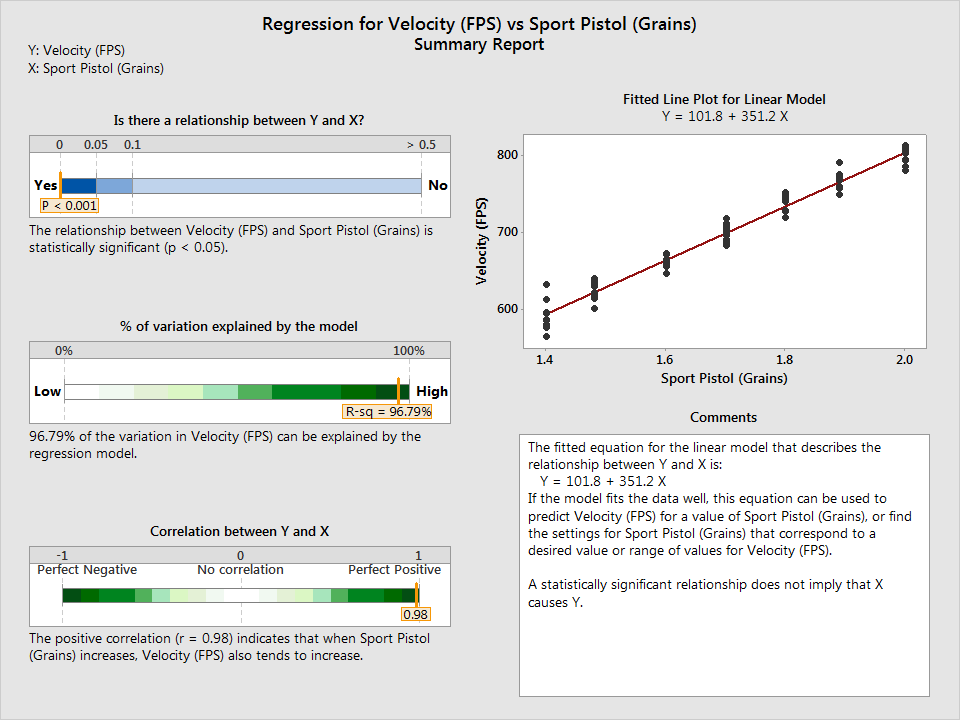

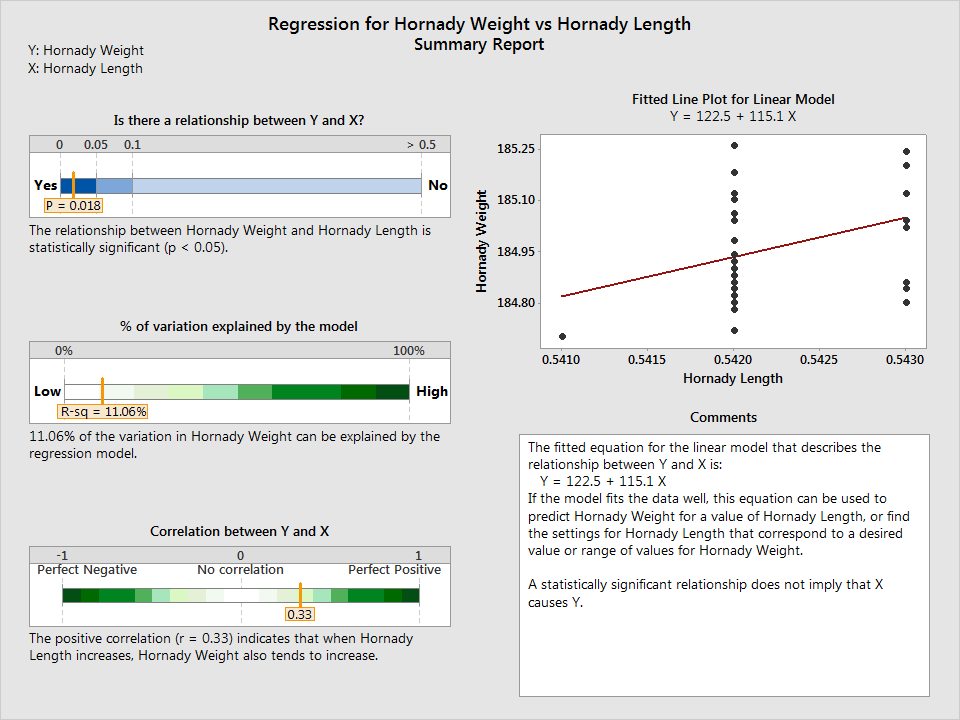

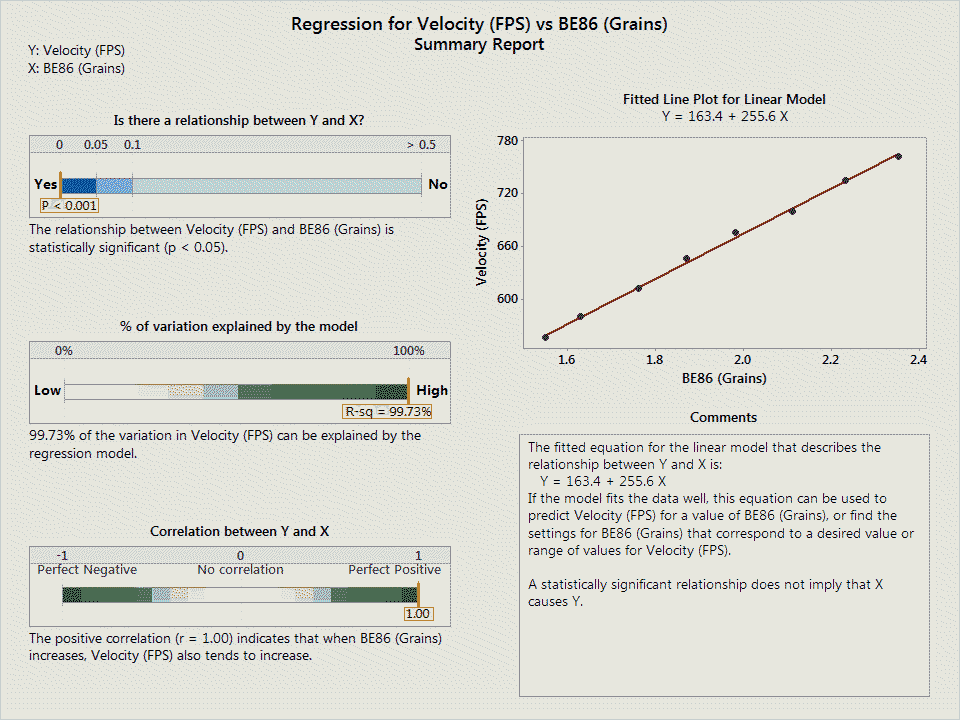

The first relationship, that more powder produces more velocity, is obvious. More propellant, within pressure limits of course, will always equal more velocity. I wasn’t disappointed here: velocity rose with charge weight, and this was the strongest relationship of all the regression tests performed.
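For readers without Minitab, the same kind of simple regression can be sketched in a few lines of plain Python. The `linear_fit` helper is my own illustrative name, and the charge and velocity numbers below are placeholders chosen only to show the mechanics; they are not Dave's charges or chronograph readings.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept.
    Returns (slope, intercept, r_squared)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)  # squared Pearson correlation
    return slope, intercept, r_squared

# Placeholder data only: NOT the measured charges or velocities.
charges_gr = [1.0, 1.2, 1.4, 1.6, 1.8]
velocity_fps = [500.0, 560.0, 615.0, 680.0, 735.0]

slope, intercept, r2 = linear_fit(charges_gr, velocity_fps)
print(f"velocity ~ {slope:.1f} * charge + {intercept:.1f}, R^2 = {r2:.3f}")
```

Swapping in the charge and average-velocity columns from the table gives the slope (FPS gained per grain) and the R-squared for the charge-versus-velocity relationship.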

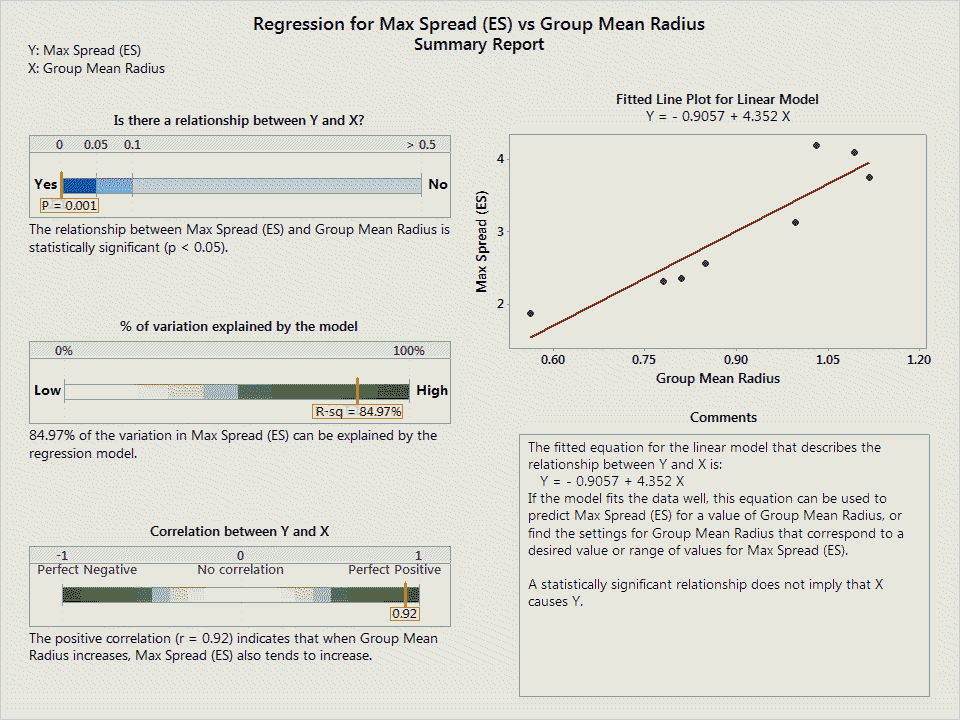

The second relationship, the larger the extreme spread, the larger the mean radius, was also strongly demonstrated in the data. These are two different methods of determining group quality. Extreme spread uses only the two outermost strikes to determine the group size, while mean radius is the average distance of every bullet strike from the calculated center of the group. Even though these are different ways of measuring group size, they intuitively correspond to one another: a group with a large extreme spread should also have a larger mean radius, since the widely spaced shots pull the average distance up.
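The two measurements can be sketched as follows, assuming each shot is recorded as an (x, y) hole-center coordinate in inches. The function names and the four-shot example are hypothetical, and I make no claim that TargetScan computes things exactly this way.

```python
import math
from itertools import combinations

def group_center(shots):
    """Average x and y of all shots: the calculated center of the group."""
    n = len(shots)
    return (sum(x for x, _ in shots) / n, sum(y for _, y in shots) / n)

def mean_radius(shots):
    """Average distance of every shot from the group center."""
    cx, cy = group_center(shots)
    return sum(math.hypot(x - cx, y - cy) for x, y in shots) / len(shots)

def extreme_spread(shots):
    """Largest center-to-center distance between any two shots."""
    return max(math.hypot(ax - bx, ay - by)
               for (ax, ay), (bx, by) in combinations(shots, 2))

# Hypothetical four-shot group at the corners of a one-inch square.
shots = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
print(f"mean radius    = {mean_radius(shots):.3f} in")
print(f"extreme spread = {extreme_spread(shots):.3f} in")
```

Note that extreme spread is decided by a single pair of shots, while every shot contributes to mean radius, which is why the latter is less sensitive to one flier.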

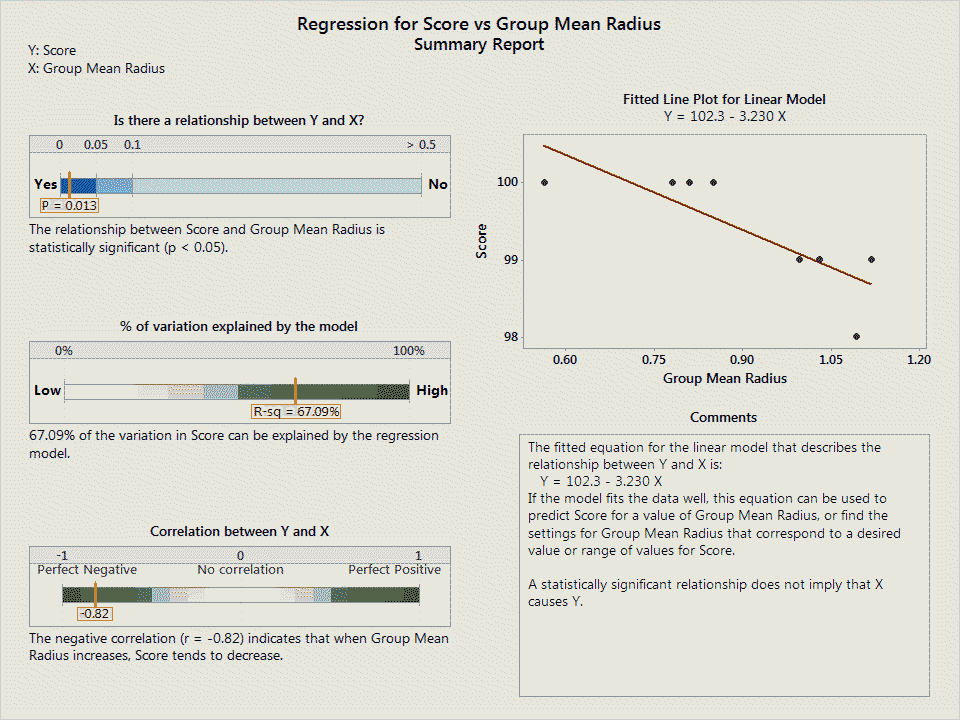

The last relationship is generally trying to capture the notion that a lower score has a larger group mean radius. That is, all things being equal, a lower score will have a larger group. Of course, you could have a theoretical group size of .452″, or the perfect, one shot group, but unless that group is located in the X or 10 ring, and instead is located in the 7 ring, it simply won’t be considered a good group due to poor scoring. Otherwise, a good group should be small and delivered to the target in the center of the scoring area for maximum quality.

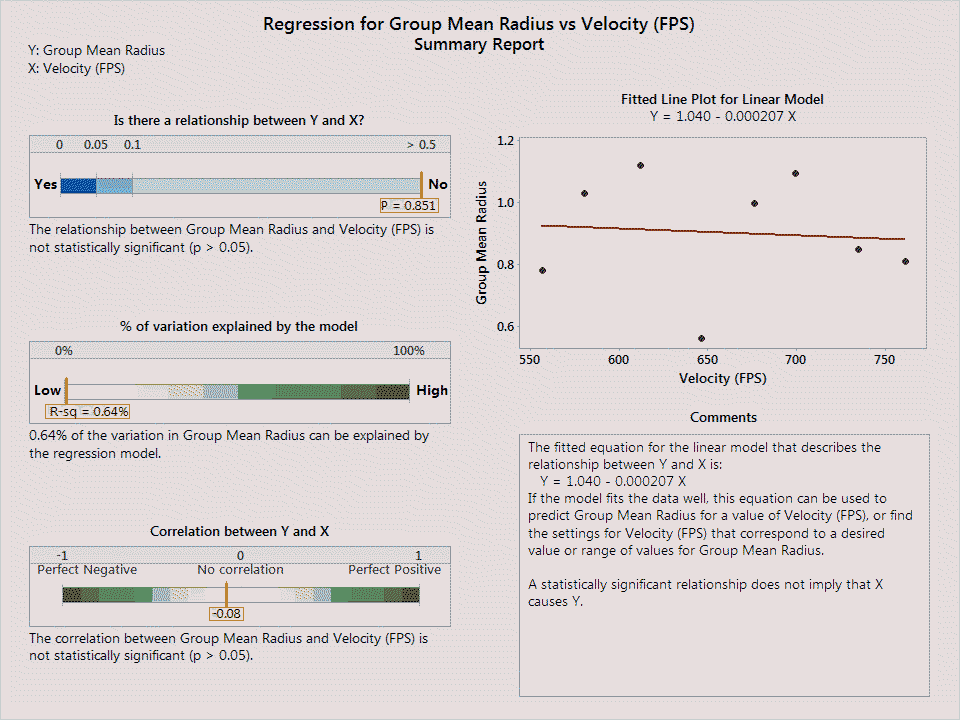

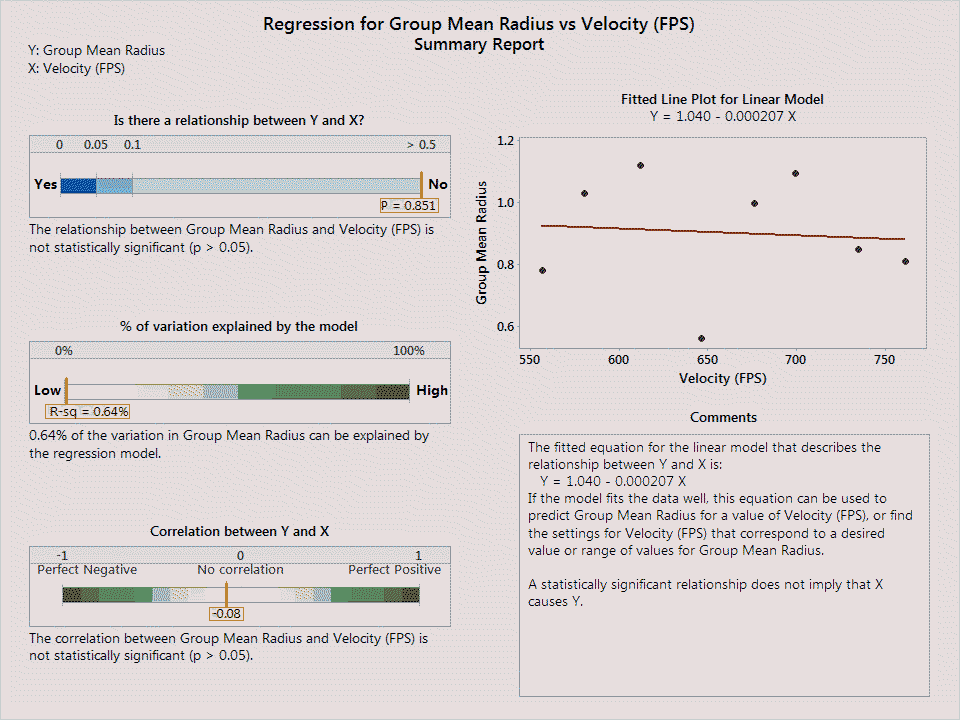

Interestingly, there is no relationship between velocity and mean radius:

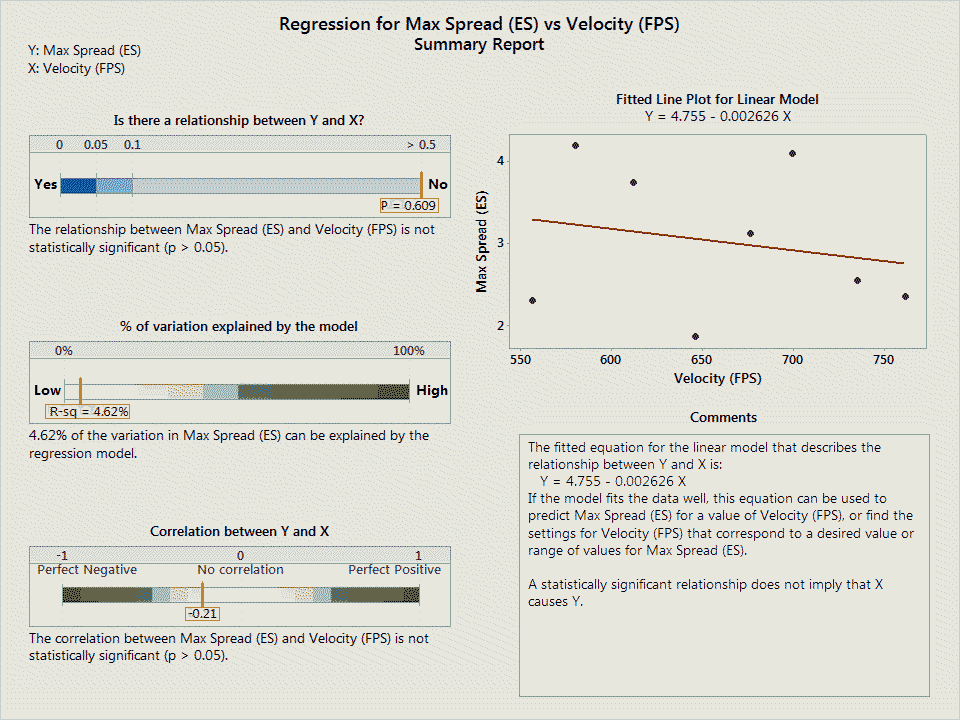

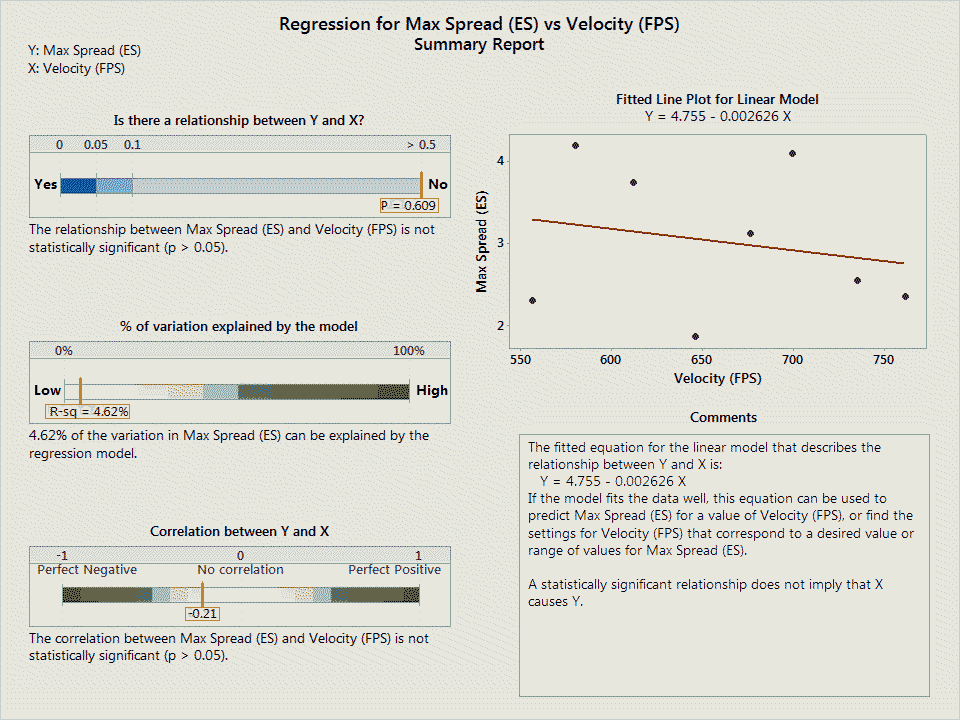

Nor does there appear to be a relationship between velocity and extreme spread:

There could be many reasons no such relationship is apparent, though exploring them presently is beyond my remit.

Where does this leave us? It’s nice to know that the data presented seems to be of good quality and makes sense, but how would we determine which particular load to pick as being “the best” or that we should explore further? I believe using the mean radius measurement is the best measure as it gives some structure to the decision making process, removes the extreme spread measure which relies on the two worst shots to tell the story, and is statistically rigorous enough that it can be used as a mark of quality. Plus, with the TargetScan application, determining the mean radius is completed as quickly as snapping a photo.

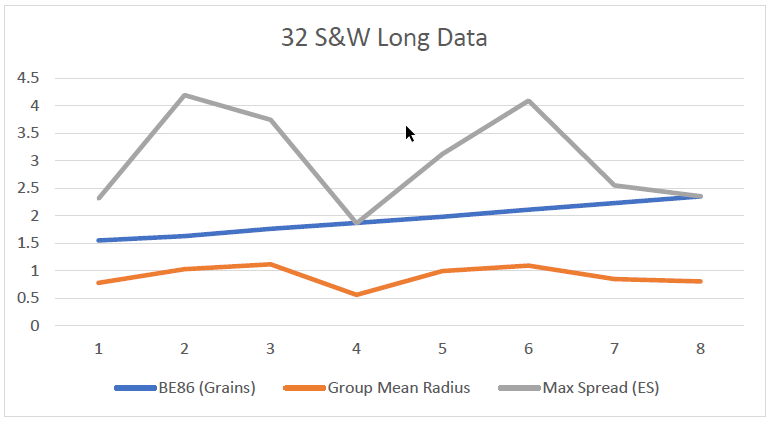

Once you collect the relevant data, you can make a simple line chart:

In this particular case, I’d further explore load number 4, as it had the lowest mean radius.
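If the table lives in code rather than a spreadsheet, picking the candidate load is a one-liner. This is only a sketch: the load labels and mean radius values below are placeholders, not the measured results, and the rough text chart stands in for the line chart.

```python
# Placeholder mean radii in inches, keyed by a hypothetical load label.
mean_radius_by_load = {"A": 0.95, "B": 0.82, "C": 0.90}

# The load whose group has the smallest mean radius is the one to explore further.
best_load = min(mean_radius_by_load, key=mean_radius_by_load.get)

# Crude text chart: one '#' per 0.05 inch of mean radius.
for load, radius in mean_radius_by_load.items():
    bar = "#" * round(radius / 0.05)
    marker = " <- lowest" if load == best_load else ""
    print(f"load {load}: {radius:.2f} in {bar}{marker}")
```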

Final points:

- There does not appear to be a relationship between group size and velocity.

- The velocity data is spooky in how well it matches the modeling.

- This data starts to give one the impression of what is possible out of this kind of test fixture, though it says little about offhand shooting.

- From a scoring perspective, the worst ten shot string was a 98 while the best was 100.

- The relationships one would expect are in the data.

Thanks to Dave Wilson for the data!